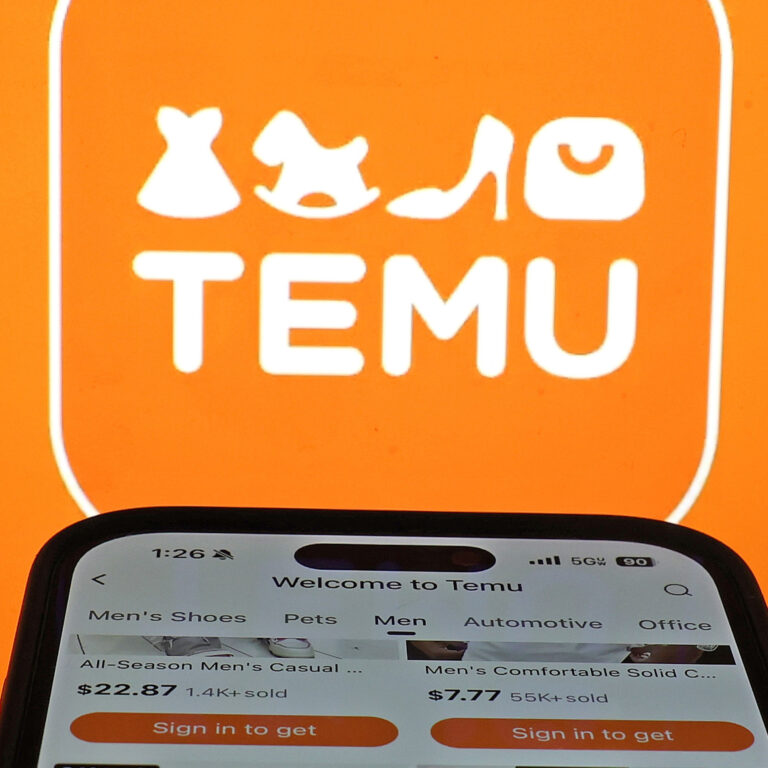

Few new e-commerce companies have had a rise as meteoric as Temu's. The company, which launched in the fall of 2022, has been flooding the American advertising market, buying up much of the ad inventory on Facebook, Snapchat, and beyond. According to the market intelligence firm Sensor Tower, Temu is one of the most downloaded iPhone apps in the country, with around 50 million monthly active users.

On today’s show, we go deep on Temu: How does it work, how did it manage such a quick rise in the U.S., and what hints might it offer us about the future of retail? Plus, we’ll talk to the bicycle-loving U.S. Representative who is working to close a loophole that has helped fuel Temu’s swift ascent.

This episode was hosted by Nick Fountain and Alexi Horowitz-Ghazi with reporting from Emily Feng. It was produced by Sam Yellowhorse Kesler and Emma Peaslee. It was edited by Keith Romer, fact-checked by Sierra Juarez, and engineered by Cena Loffredo. Alex Goldmark is Planet Money’s executive producer.

Help support Planet Money and get bonus episodes by subscribing to Planet Money+ in Apple Podcasts or at plus.npr.org/planetmoney.
